The AI adoption wave has hit enterprise IT with the force of a mandate. Every board wants an AI strategy. Every business unit is experimenting with ChatGPT. Every vendor is adding "AI-powered" to their product name.

But behind the excitement, a critical question remains largely unaddressed: who controls the AI, and where does your data go when you use it?

The Control Problem

When an organization deploys Microsoft Copilot, the AI processing happens on Microsoft's infrastructure, using Microsoft's models, under Microsoft's terms of service. The same applies to Google Gemini, Amazon Bedrock, and most SaaS AI offerings.

For general productivity use (summarizing emails, drafting documents, generating presentations), this is usually fine. The data sensitivity is low, and the vendor's terms are acceptable for that level of risk.

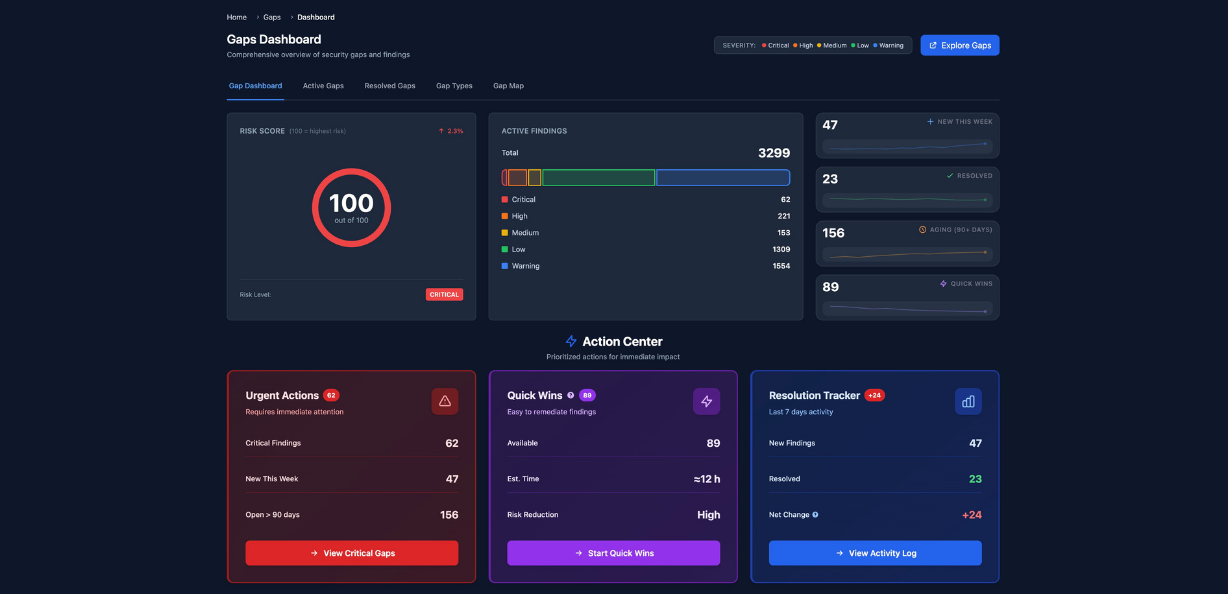

But for three categories of use, the control problem becomes critical:

Category 1 — Sensitive data. Legal documents, financial models, patient records, defense communications, intellectual property. When this data is processed by an AI model operated by a US-headquartered provider, it's subject to the same extraterritorial jurisdictional risks (the US CLOUD Act, for example) as any other workload in that provider's cloud.

Category 2 — Strategic intelligence. Competitive analysis, M&A due diligence, pricing models, R&D data. Feeding this into a shared AI model — even one that claims data isolation — creates risk that most organizations haven't fully evaluated.

Category 3 — Regulated environments. Healthcare (HIPAA), government (sovereignty requirements), defense (classified), financial services (compliance). These environments often have explicit restrictions on where data can be processed and by whom.

The Three Levels of AI Deployment

We structure AI deployment across three levels of increasing control:

Level 1 — Copilot M365 Baseline. Microsoft's standard AI offering within the M365 tenant. Good for individual productivity. Limited customization. Data stays within the Microsoft ecosystem but under Microsoft's terms.

Level 2 — Agents on Your Data (Copilot Studio / AI Foundry). Custom AI agents connected to your SharePoint, databases, and business systems. More control over what the AI accesses. Still within the Microsoft ecosystem but with tenant-level isolation.

Level 3 — Sovereign AI Deployment. Models deployed on sovereign infrastructure (Scaleway, OVH, Infomaniak) or on-premises, using open models like Mistral or sovereign platforms like Delos. Full control over the model, the data, and the processing environment. No extraterritorial jurisdiction.

The Honest Assessment

Level 3 is not for everyone. Sovereign AI deployment requires GPU infrastructure, model management expertise, and ongoing operational investment. For most organizations, Level 1 or Level 2 delivers most of the value at a fraction of the cost.

But for organizations handling sensitive data, operating in regulated environments, or making strategic decisions based on AI analysis — Level 3 isn't a luxury. It's a requirement.
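To make the rule of thumb concrete, here is a minimal sketch in Python of how a workload could be mapped to one of the three levels. It is an illustration under stated assumptions, not a prescribed method: the `Workload` fields, the `required_level` function, and the example workloads are all hypothetical names invented for this sketch, and a real assessment would weigh far more than four yes/no flags.

```python
from dataclasses import dataclass
from enum import IntEnum


class Level(IntEnum):
    COPILOT_BASELINE = 1  # Level 1: Copilot within the M365 tenant
    AGENTS_ON_DATA = 2    # Level 2: custom agents (Copilot Studio / AI Foundry)
    SOVEREIGN = 3         # Level 3: models on sovereign or on-premises infrastructure


@dataclass(frozen=True)
class Workload:
    # Every field here is a hypothetical flag chosen for illustration.
    name: str
    sensitive_data: bool = False          # Category 1
    strategic_intelligence: bool = False  # Category 2
    regulated: bool = False               # Category 3
    needs_business_systems: bool = False  # must reach SharePoint, databases, etc.


def required_level(w: Workload) -> Level:
    """Return the lowest level that satisfies the workload's constraints."""
    # Any of the three critical categories pushes the workload to Level 3.
    if w.sensitive_data or w.strategic_intelligence or w.regulated:
        return Level.SOVEREIGN
    # Otherwise, access to internal systems calls for custom agents.
    if w.needs_business_systems:
        return Level.AGENTS_ON_DATA
    # General productivity use stays on the baseline.
    return Level.COPILOT_BASELINE


workloads = [
    Workload("email summaries"),
    Workload("internal FAQ agent", needs_business_systems=True),
    Workload("M&A due diligence", strategic_intelligence=True),
]

for w in workloads:
    print(f"{w.name}: Level {required_level(w).value}")
```

The ordering of the checks is the design choice that matters: the three critical categories are evaluated first, so a workload that is both regulated and connected to business systems lands on Level 3 rather than Level 2.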

The Architecture Decision

The question isn't "AI or no AI." It's "which AI workloads need sovereign deployment, and which ones are fine in the standard Microsoft ecosystem?" Sound familiar? It's the same boundary question we ask about every other technology layer.

At Novarque, we design that boundary — for cloud, for security, and for AI.

We don't sell a stack. We design the right architecture.